Stored program architecture

The defining feature of modern computers, which distinguishes them from all other machines, is that they can be programmed. That is, a list of instructions (the program) can be given to the computer, which will store them and carry them out at some time in the future.

In most cases, computer instructions are simple: add one number to another, move some data from one location to another, send a message to some external device, etc. These instructions are read from the computer's memory and are generally carried out (executed) in the order they were given. However, there are usually specialized instructions to tell the computer to jump ahead or backwards to some other place in the program and to carry on executing from there. These are called "jump" instructions (or branches). Furthermore, jump instructions may be made to happen conditionally so that different sequences of instructions may be used depending on the result of some previous calculation or some external event. Many computers directly support subroutines by providing a type of jump that "remembers" the location it jumped from and another instruction to return to the instruction following that jump instruction.
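Jumps, conditional branches and subroutine calls can be illustrated with a toy machine written in Python. This is a minimal sketch under stated assumptions: the instruction names (`SET`, `ADD`, `JUMP`, `JUMP_IF_ZERO`, `CALL`, `RET`, `HALT`) and the named registers are hypothetical and do not belong to any real processor. The program counter `pc` normally just advances to the next instruction; jumps overwrite it, and `CALL` pushes the address of the following instruction onto a stack so that `RET` can return there.

```python
from typing import Dict, List


def run(program: List[tuple]) -> Dict[str, int]:
    """Execute a list of (opcode, *operands) tuples on a hypothetical toy machine."""
    regs: Dict[str, int] = {}
    return_stack: List[int] = []  # saved return addresses for CALL/RET
    pc = 0  # program counter: index of the next instruction to execute
    while True:
        op, *args = program[pc]
        pc += 1  # by default, carry on with the following instruction
        if op == "SET":
            reg, value = args
            regs[reg] = value
        elif op == "ADD":
            dst, src = args
            regs[dst] += regs[src]
        elif op == "JUMP":  # unconditional jump
            (pc,) = args
        elif op == "JUMP_IF_ZERO":  # conditional jump
            reg, target = args
            if regs[reg] == 0:
                pc = target
        elif op == "CALL":  # a jump that remembers where it came from
            (target,) = args
            return_stack.append(pc)
            pc = target
        elif op == "RET":  # go back to the instruction after the CALL
            pc = return_stack.pop()
        elif op == "HALT":
            return regs
        else:
            raise ValueError(f"unknown instruction {op!r}")


# Multiply 7 by 3 by calling an "add y to x" subroutine three times.
program = [
    ("SET", "x", 0),                # 0
    ("SET", "y", 7),                # 1
    ("SET", "count", 3),            # 2
    ("SET", "minus_one", -1),       # 3
    ("JUMP_IF_ZERO", "count", 8),   # 4: leave the loop once count reaches 0
    ("CALL", 9),                    # 5: run the subroutine
    ("ADD", "count", "minus_one"),  # 6
    ("JUMP", 4),                    # 7: back to the loop test
    ("HALT",),                      # 8
    ("ADD", "x", "y"),              # 9: subroutine body
    ("RET",),                       # 10
]
print(run(program)["x"])  # 21
```

The loop at addresses 4 to 7 combines a conditional jump (to exit) with an unconditional one (to repeat), and the return stack is what lets the same subroutine be called from any point in the program.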

Program execution might be likened to reading a book. While a person will normally read each word and line in sequence, they may at times jump back to an earlier place in the text or skip sections that are not of interest. Similarly, a computer may sometimes go back and repeat the instructions in some section of the program over and over again until some internal condition is met. This is called the flow of control within the program and it is what allows the computer to perform tasks repeatedly without human intervention.

Comparatively, a person using a pocket calculator can perform a basic arithmetic operation such as adding two numbers with just a few button presses. But to add together all of the numbers from 1 to 1,000 would take thousands of button presses and a lot of time, with a near certainty of making a mistake. On the other hand, a computer may be programmed to do this with just a few simple instructions. For example:

```
        mov #0,sum      ; set sum to 0
        mov #1,num      ; set num to 1
loop:   add num,sum     ; add num to sum
        add #1,num      ; add 1 to num
        cmp num,#1000   ; compare num to 1000
        ble loop        ; if num <= 1000, go back to 'loop'
        halt            ; end of program. stop running
```

Once told to run this program, the computer will perform the repetitive addition task without further human intervention. It will almost never make a mistake and a modern PC can complete the task in about a millionth of a second.[16]
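The assembly listing can be checked by executing it. The following Python sketch is a tiny interpreter for exactly those seven lines, under these assumptions: operands beginning with `#` are constants and other names are memory variables, `add a,b` adds `a` into `b`, and `cmp` followed by `ble` branches when the first operand is less than or equal to the second, as the comments in the listing describe. It handles only these five mnemonics and is not a model of any particular real processor.

```python
from typing import Dict, List, Tuple

SOURCE = """
        mov #0,sum      ; set sum to 0
        mov #1,num      ; set num to 1
loop:   add num,sum     ; add num to sum
        add #1,num      ; add 1 to num
        cmp num,#1000   ; compare num to 1000
        ble loop        ; if num <= 1000, go back to 'loop'
        halt            ; end of program. stop running
"""


def parse(source: str) -> Tuple[List[Tuple[str, List[str]]], Dict[str, int]]:
    """Split the listing into (mnemonic, operands) pairs plus a label table."""
    program: List[Tuple[str, List[str]]] = []
    labels: Dict[str, int] = {}
    for line in source.splitlines():
        line = line.split(";", 1)[0].strip()  # drop the comment
        if not line:
            continue
        if ":" in line:  # "loop: add num,sum" defines the label "loop"
            label, line = line.split(":", 1)
            labels[label.strip()] = len(program)
            line = line.strip()
        mnemonic, _, rest = line.partition(" ")
        operands = [op.strip() for op in rest.split(",")] if rest.strip() else []
        program.append((mnemonic, operands))
    return program, labels


def run(source: str) -> Dict[str, int]:
    program, labels = parse(source)
    memory: Dict[str, int] = {}

    def value(operand: str) -> int:
        # '#' marks an immediate constant; any other operand names a variable
        return int(operand[1:]) if operand.startswith("#") else memory[operand]

    pc, compare_result = 0, 0
    while True:
        mnemonic, ops = program[pc]
        pc += 1
        if mnemonic == "mov":
            memory[ops[1]] = value(ops[0])
        elif mnemonic == "add":
            memory[ops[1]] += value(ops[0])
        elif mnemonic == "cmp":
            compare_result = value(ops[0]) - value(ops[1])  # only the sign matters
        elif mnemonic == "ble":
            if compare_result <= 0:  # first operand was <= second
                pc = labels[ops[0]]
        elif mnemonic == "halt":
            return memory
        else:
            raise ValueError(f"unsupported instruction: {mnemonic}")


print(run(SOURCE)["sum"])  # 500500
```

The loop body runs 1,000 times, and `num` ends at 1001, the first value for which the `ble` branch is not taken.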

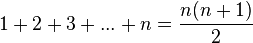

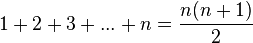

However, computers cannot "think" for themselves in the sense that they only solve problems in exactly the way they are programmed to. An intelligent human faced with the above addition task might soon realize that, instead of actually adding up all the numbers, one can simply use the equation

$$1 + 2 + 3 + \cdots + n = \frac{n(n+1)}{2}$$

and arrive at the correct answer, 1000 × 1001 / 2 = 500,500, with little work.[17] In other words, a computer programmed to add up the numbers one by one as in the example above would do exactly that without regard to efficiency or alternative solutions.
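The difference between the two approaches is easy to see in Python. `sum_by_loop` follows the step-by-step program literally, performing one addition per number, while `sum_by_formula` applies the closed form 1 + 2 + ... + n = n(n + 1)/2. Both function names are illustrative.

```python
def sum_by_loop(n: int) -> int:
    """Add 1, 2, ..., n one at a time, as the step-by-step program does."""
    total = 0
    for k in range(1, n + 1):
        total += k
    return total


def sum_by_formula(n: int) -> int:
    """Use n(n + 1) / 2; n(n + 1) is always even, so integer division is exact."""
    return n * (n + 1) // 2


print(sum_by_loop(1000), sum_by_formula(1000))  # 500500 500500
```

The loop performs n additions, so its work grows with n; the formula costs one addition, one multiplication and one division whatever n is.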

Programs

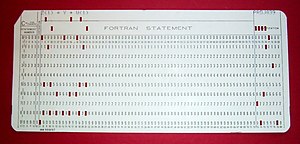

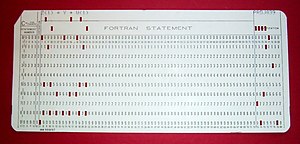

A 1970s punched card containing one line from a FORTRAN program. The card reads: "Z(1) = Y + W(1)" and is labelled "PROJ039" for identification purposes.

In practical terms, a computer program may range from just a few instructions to many millions of instructions, as in a program for a word processor or a web browser. A typical modern computer can execute billions of instructions per second, with processor clock rates measured in gigahertz (GHz), and rarely makes a mistake over many years of operation. Large computer programs consisting of several million instructions may take teams of programmers years to write, and due to the complexity of the task they almost certainly contain errors.

Errors in computer programs are called "bugs". Bugs may be benign and not affect the usefulness of the program, or have only subtle effects. But in some cases they may cause the program to "hang" (become unresponsive to input such as mouse clicks or keystrokes) or to fail completely ("crash"). Otherwise benign bugs may sometimes be harnessed for malicious intent by an unscrupulous user writing an "exploit": code designed to take advantage of a bug and disrupt a program's proper execution. Bugs are usually not the fault of the computer. Since computers merely execute the instructions they are given, bugs are nearly always the result of programmer error or an oversight made in the program's design.[18]
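Off-by-one errors are a common example of such programmer error. In this Python sketch (function names are illustrative), `sum_to_buggy` repeats the earlier summation of 1 to 1,000 but tests `num < n` where `num <= n` was intended. The computer runs both versions exactly as written, without any error message; the buggy one simply stops one number early.

```python
def sum_to(n: int) -> int:
    """Intended behaviour: return 1 + 2 + ... + n."""
    total, num = 0, 1
    while num <= n:  # correct test: include n itself
        total += num
        num += 1
    return total


def sum_to_buggy(n: int) -> int:
    """The same loop with an off-by-one bug in its test."""
    total, num = 0, 1
    while num < n:  # bug: stops before adding n
        total += num
        num += 1
    return total


print(sum_to(1000))        # 500500
print(sum_to_buggy(1000))  # 499500
```

The buggy version computes 1 + 2 + ... + 999 = 499,500, exactly 1,000 short of the correct total, and nothing in the output itself signals that anything went wrong.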

In most computers, individual instructions are stored as machine code, with each instruction being given a unique number (its operation code or opcode for short). The command to add two numbers together would have one opcode, the command to multiply them would have a different opcode and so on. The simplest computers are able to perform any of a handful of different instructions; the more complex computers have several hundred to choose from, each with a unique numerical code. Since the computer's memory is able to store numbers, it can also store the instruction codes. This leads to the important fact that entire programs (which are just lists of instructions) can be represented as lists of numbers and can themselves be manipulated inside the computer just as if they were numeric data. The fundamental concept of storing programs in the computer's memory alongside the data they operate on is the crux of the von Neumann, or stored program, architecture. In some cases, a computer might store some or all of its program in memory that is kept separate from the data it operates on. This is called the Harvard architecture after the Harvard Mark I computer. Modern von Neumann computers display some traits of the Harvard architecture in their designs, such as in CPU caches.
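The stored-program idea can be sketched in Python with a single list standing in for memory, holding both the encoded instructions and the data they operate on. The opcode numbers (0 = HALT, 1 = LOAD, 2 = ADD, 3 = STORE), the two-cell instruction format and the single accumulator are hypothetical choices for illustration. Because the program is only numbers, it can be changed exactly like data, as the last lines do.

```python
from typing import List

HALT, LOAD, ADD, STORE = 0, 1, 2, 3  # hypothetical opcode numbers


def run(memory: List[int]) -> List[int]:
    """Fetch and execute (opcode, address) pairs starting at address 0."""
    acc, pc = 0, 0  # accumulator and program counter
    while True:
        opcode, address = memory[pc], memory[pc + 1]  # fetch
        pc += 2
        if opcode == LOAD:  # decode and execute
            acc = memory[address]
        elif opcode == ADD:
            acc += memory[address]
        elif opcode == STORE:
            memory[address] = acc
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"bad opcode {opcode} at address {pc - 2}")


# Addresses 0-7 hold the program; addresses 8-10 hold the data.
memory = [
    LOAD, 8,    # acc <- memory[8]
    ADD, 9,     # acc <- acc + memory[9]
    STORE, 10,  # memory[10] <- acc
    HALT, 0,
    20, 22, 0,  # data: two inputs and a cell for the result
]
print(memory[:8])             # [1, 8, 2, 9, 3, 10, 0, 0]
print(run(list(memory))[10])  # 42

# Treat the program as data: point the ADD at address 8 instead of 9.
patched = list(memory)
patched[3] = 8
print(run(patched)[10])       # 40
```

In a Harvard-style design, the instructions would instead live in a list separate from the data, and the program could not be patched through ordinary data accesses in the same way.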

While it is possible to write computer programs as long lists of numbers (machine language), and this technique was used with many early computers,[19] it is extremely tedious to do so in practice, especially for complicated programs. Instead, each basic instruction can be given a short name that is indicative of its function and easy to remember: a mnemonic such as ADD, SUB, MULT or JUMP. These mnemonics are collectively known as a computer's assembly language. Converting programs written in assembly language into something the computer can actually understand (machine language) is usually done by a computer program called an assembler. Machine languages and the assembly languages that represent them (collectively termed low-level programming languages) tend to be unique to a particular type of computer. For instance, an ARM architecture computer (such as may be found in a PDA or a hand-held videogame) cannot understand the machine language of an Intel Pentium or the AMD Athlon 64 computer that might be in a PC.[20]
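An assembler's core job can be sketched as a table lookup from mnemonics to numeric opcodes, plus a first pass that works out the address of every label. The Python sketch below is a minimal two-pass assembler for a hypothetical machine: the mnemonics, the opcode numbers in `OPCODES`, the two-number instruction format and the `DATA` directive are all assumptions, not the encoding of any real processor.

```python
from typing import Dict, List

# Hypothetical opcode numbers for a made-up machine.
OPCODES: Dict[str, int] = {"HALT": 0, "LOAD": 1, "ADD": 2, "STORE": 3}


def assemble(source: str) -> List[int]:
    """Translate mnemonic assembly into a flat list of numbers.

    Each instruction becomes two numbers (opcode, operand), where the operand
    is an address written as a number or a label. 'DATA n' emits the number n.
    """
    lines = [raw.split(";", 1)[0].strip() for raw in source.splitlines()]
    lines = [text for text in lines if text]  # drop blank and comment-only lines

    # Pass 1: work out the address of every label.
    labels: Dict[str, int] = {}
    address = 0
    for text in lines:
        if text.endswith(":"):
            labels[text[:-1]] = address
        elif text.split()[0] == "DATA":
            address += 1
        else:
            address += 2

    # Pass 2: emit machine code, replacing labels with their addresses.
    code: List[int] = []
    for text in lines:
        if text.endswith(":"):
            continue
        mnemonic, *rest = text.split()
        if mnemonic == "DATA":
            code.append(int(rest[0]))
            continue
        arg = rest[0] if rest else "0"
        code.append(OPCODES[mnemonic])
        code.append(labels[arg] if arg in labels else int(arg))
    return code


SOURCE = """
        LOAD  x       ; acc <- x
        ADD   y       ; acc <- acc + y
        STORE total   ; total <- acc
        HALT
x:
        DATA  20
y:
        DATA  22
total:
        DATA  0
"""
print(assemble(SOURCE))  # [1, 8, 2, 9, 3, 10, 0, 0, 20, 22, 0]
```

Because the output is fixed by the `OPCODES` table and the instruction format, assembling for a different kind of processor would need a different table and format, which is one reason machine and assembly languages are tied to a particular type of computer.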

Though considerably easier than in machine language, writing long programs in assembly language is often difficult and error-prone. Therefore, most complicated programs are written in more abstract high-level programming languages that are able to express the needs of the programmer more conveniently (and thereby help reduce programmer error). High-level languages are usually "compiled" into machine language (or sometimes into assembly language and then into machine language) using another computer program called a compiler.[21] Since high-level languages are more abstract than assembly language, it is possible to use different compilers to translate the same high-level language program into the machine language of many different types of computer. This is part of the means by which software like video games may be made available for different computer architectures such as personal computers and various video game consoles.
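To illustrate how one high-level program can be translated for different machines, the Python sketch below parses an arithmetic expression once with the standard `ast` module and then hands the same syntax tree to two back ends: one emitting code for a hypothetical stack machine, the other emitting three-address code for a hypothetical register machine. Both instruction sets (`PUSH`, `LOAD`, `ADD`, `SUB`, `MUL`) are invented for illustration, and only `+`, `-` and `*` on constants and variable names are handled.

```python
import ast
from typing import List

# Hypothetical instruction names for the operators this toy compiler supports.
OPS = {ast.Add: "ADD", ast.Sub: "SUB", ast.Mult: "MUL"}


def operand(node: ast.AST) -> str:
    """Render a constant or a variable name as an instruction operand."""
    if isinstance(node, ast.Constant):
        return str(node.value)
    if isinstance(node, ast.Name):
        return node.id
    raise ValueError(f"unsupported syntax: {ast.dump(node)}")


def to_stack_code(node: ast.AST) -> List[str]:
    """Back end 1: a stack machine; each operator pops two values, pushes one."""
    if isinstance(node, ast.BinOp):
        return to_stack_code(node.left) + to_stack_code(node.right) + [OPS[type(node.op)]]
    return [f"PUSH {operand(node)}"]


def to_register_code(node: ast.AST, code: List[str], counter: List[int]) -> str:
    """Back end 2: three-address code; returns the register holding the result."""
    if isinstance(node, ast.BinOp):
        left = to_register_code(node.left, code, counter)
        right = to_register_code(node.right, code, counter)
        counter[0] += 1
        dest = f"r{counter[0]}"
        code.append(f"{OPS[type(node.op)]} {dest}, {left}, {right}")
        return dest
    counter[0] += 1
    dest = f"r{counter[0]}"
    code.append(f"LOAD {dest}, {operand(node)}")
    return dest


def compile_expression(source: str, target: str) -> List[str]:
    tree = ast.parse(source, mode="eval").body  # shared front end: parse once
    if target == "stack":
        return to_stack_code(tree)
    if target == "register":
        code: List[str] = []
        to_register_code(tree, code, [0])
        return code
    raise ValueError(f"unknown target {target!r}")


print(compile_expression("(a + 2) * b", "stack"))
# ['PUSH a', 'PUSH 2', 'ADD', 'PUSH b', 'MUL']
print(compile_expression("(a + 2) * b", "register"))
# ['LOAD r1, a', 'LOAD r2, 2', 'ADD r3, r1, r2', 'LOAD r4, b', 'MUL r5, r3, r4']
```

Only the back end differs between the two targets; the parsing step is shared, a structure that retargetable compilers follow on a much larger scale.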

The task of developing large software systems presents a significant intellectual challenge. Producing software with an acceptably high reliability within a predictable schedule and budget has historically been difficult; the academic and professional discipline of software engineering concentrates specifically on this challenge.

Example

A traffic light showing red

Suppose a computer is being employed to drive a traffic light at an intersection between two streets. The computer has the following basic instructions:

- ON(Streetname, Color): turns on the light of the specified Color on Streetname.

- OFF(Streetname, Color): turns off the light of the specified Color on Streetname.

- WAIT(Seconds): waits the specified number of seconds.

- START: starts the program.

- REPEAT: tells the computer to repeat a specified part of the program in a loop.

Comments begin with // and run to the end of the line. Comments in a computer program do not affect its operation; they are not evaluated by the computer. Assume the streets are named Broadway and Main.

```
START

//Let Broadway traffic go
OFF(Broadway, Red)
ON(Broadway, Green)
WAIT(60 seconds)

//Stop Broadway traffic
OFF(Broadway, Green)
ON(Broadway, Yellow)
WAIT(3 seconds)
OFF(Broadway, Yellow)
ON(Broadway, Red)

//Let Main traffic go
OFF(Main, Red)
ON(Main, Green)
WAIT(60 seconds)

//Stop Main traffic
OFF(Main, Green)
ON(Main, Yellow)
WAIT(3 seconds)
OFF(Main, Yellow)
ON(Main, Red)

//Tell computer to continuously repeat the program.
REPEAT ALL
```

With this set of instructions, the computer would cycle the light continually through red, green, yellow and back to red again on both streets.
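The first traffic-light program can be sketched in Python. Here `on`, `off` and `wait` are hypothetical stand-ins for the ON, OFF and WAIT instructions: instead of driving real lamps they record each action in a log, and `wait` does not actually pause. REPEAT ALL becomes a loop, bounded by a `cycles` parameter only so that the example terminates.

```python
from typing import List, Tuple

Action = Tuple[str, ...]


def run_normal_cycle(cycles: int) -> List[Action]:
    """Record the ON/OFF/WAIT actions of the first traffic-light program."""
    log: List[Action] = []

    def on(street: str, color: str) -> None:
        log.append(("ON", street, color))

    def off(street: str, color: str) -> None:
        log.append(("OFF", street, color))

    def wait(seconds: int) -> None:
        log.append(("WAIT", str(seconds)))  # a real controller would pause here

    for _ in range(cycles):  # REPEAT ALL, bounded so the example terminates
        for street in ("Broadway", "Main"):
            # Let this street's traffic go
            off(street, "Red")
            on(street, "Green")
            wait(60)
            # Stop this street's traffic
            off(street, "Green")
            on(street, "Yellow")
            wait(3)
            off(street, "Yellow")
            on(street, "Red")
    return log


log = run_normal_cycle(1)
print(log[:3])  # [('OFF', 'Broadway', 'Red'), ('ON', 'Broadway', 'Green'), ('WAIT', '60')]
print(sum(int(a[1]) for a in log if a[0] == "WAIT"))  # 126
```

Looping over the two street names replaces the duplicated Broadway and Main blocks of the original; summing the waits gives 2 × (60 + 3) = 126 seconds per full cycle.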

However, suppose a simple on/off switch is connected to the computer so that the lights can be made to flash red while some maintenance operation is being performed. The program might then instruct the computer to:

```
START

IF Switch == OFF THEN: //Normal traffic signal operation
{
    //Let Broadway traffic go
    OFF(Broadway, Red)
    ON(Broadway, Green)
    WAIT(60 seconds)

    //Stop Broadway traffic
    OFF(Broadway, Green)
    ON(Broadway, Yellow)
    WAIT(3 seconds)
    OFF(Broadway, Yellow)
    ON(Broadway, Red)

    //Let Main traffic go
    OFF(Main, Red)
    ON(Main, Green)
    WAIT(60 seconds)

    //Stop Main traffic
    OFF(Main, Green)
    ON(Main, Yellow)
    WAIT(3 seconds)
    OFF(Main, Yellow)
    ON(Main, Red)

    //Tell the computer to repeat this section continuously.
    REPEAT THIS SECTION
}

IF Switch == ON THEN: //Maintenance Mode
{
    //Turn the red lights on and wait 1 second.
    ON(Broadway, Red)
    ON(Main, Red)
    WAIT(1 second)

    //Turn the red lights off and wait 1 second.
    OFF(Broadway, Red)
    OFF(Main, Red)
    WAIT(1 second)

    //Tell the computer to repeat the statements in this section.
    REPEAT THIS SECTION
}
```

In this manner, the traffic signal will run a flash-red program when the switch is on, and will run the normal program when the switch is off. Both of these program examples show the basic layout of a computer program in a simple, familiar context of a traffic signal. Any experienced programmer can spot many software bugs in the program; for instance, it does not make sure that the green light is off when the switch is set to flash red. However, removing all possible bugs would make this program much longer and more complicated, and would confuse nontechnical readers: the aim of this example is a simple demonstration of how computer instructions are laid out.
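One way to fix the bug where the green light may stay on when the switch is set to flash red is sketched below in Python. Under these assumptions it differs from the switch program: the switch is read before every step, each normal-mode step applies one group of ON/OFF changes from the `NORMAL` table, and on entering maintenance mode every lamp is first switched off so that only the reds flash. The names `NORMAL`, `all_red` and `run_controller` are hypothetical, the waits are not actually performed, and lamp states are returned as a list of snapshots instead of driving real hardware.

```python
from typing import Dict, List, Tuple

STREETS = ("Broadway", "Main")
COLORS = ("Red", "Yellow", "Green")
Lamps = Dict[Tuple[str, str], bool]

# One normal cycle as (lamp changes, seconds to wait afterwards).
NORMAL: List[Tuple[List[Tuple[str, str, bool]], int]] = [
    ([("Broadway", "Red", False), ("Broadway", "Green", True)], 60),
    ([("Broadway", "Green", False), ("Broadway", "Yellow", True)], 3),
    ([("Broadway", "Yellow", False), ("Broadway", "Red", True),
      ("Main", "Red", False), ("Main", "Green", True)], 60),
    ([("Main", "Green", False), ("Main", "Yellow", True)], 3),
    ([("Main", "Yellow", False), ("Main", "Red", True)], 0),
]


def all_red() -> Lamps:
    """Every lamp off except the red ones."""
    return {(s, c): c == "Red" for s in STREETS for c in COLORS}


def run_controller(switch_readings: List[bool]) -> List[Lamps]:
    """Advance one step per switch reading; return lamp states after each step."""
    lamps = all_red()
    history: List[Lamps] = []
    phase, in_maintenance, reds_lit = 0, False, False
    for switch_on in switch_readings:
        if switch_on:
            if not in_maintenance:
                # The fix: switch every lamp off first, so no green or yellow stays lit.
                lamps = {key: False for key in lamps}
                in_maintenance, reds_lit = True, False
            reds_lit = not reds_lit  # flash: alternate the reds (WAIT 1 second)
            for street in STREETS:
                lamps[(street, "Red")] = reds_lit
        else:
            if in_maintenance:
                # Leaving maintenance: restart the normal cycle from all red.
                lamps, phase, in_maintenance = all_red(), 0, False
            changes, _seconds = NORMAL[phase]  # a real controller would wait here
            for street, color, state in changes:
                lamps[(street, color)] = state
            phase = (phase + 1) % len(NORMAL)
        history.append(dict(lamps))
    return history


states = run_controller([False, True, True, False])
print(states[1][("Broadway", "Green")])  # False
```

Reading the switch once per step also means a change of mode takes effect at the next step rather than never, since the flashing and normal sections here are not locked in their own endless loops.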